An ML model consists of a set of learned numerical parameters, or weights, that transform inputs into outputs, often in combination with a nonlinear function such as a sigmoid. The weights are often organized as vectors or matrices. Neural networks, decision trees and support vector machines are the types of ML models considered in this discussion.

The numerical values representing features of the data (input or intermediate data) are called feature vectors, or simply vectors. They are also called embeddings, that is, embeddings of the data in a vector space. We discussed such vectors in https://securemachinery.com/2019/05/24/transformer-gpt-2/.

The term “embedding” comes from the idea that the vectors “embed” the original data into a lower-dimensional space. The embedding process involves a combination of statistical and computational techniques, such as factorization and neural networks, that learn to map the input data into the vector space in a way that preserves the relevant properties of the original data.

The use of dense vectors to represent words became widespread in machine learning research in 2013 with the publication of the paper “Distributed Representations of Words and Phrases and their Compositionality” by Tomas Mikolov et al. This line of work introduced the word2vec algorithm, which generates dense vector representations of words based on their distributional properties in a large corpus of text.

The size of the vector or embedding in a word embedding model is a hyperparameter that needs to be determined before training the model. It is typically chosen based on the size of the vocabulary and the complexity of the task at hand. In practice, the vector size is often set to between 100 and 300 dimensions, but this can vary depending on the specific application and the available computational resources. A suitable vector size is usually found through experimentation and hyperparameter tuning.
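As a minimal sketch of what the embedding size means in practice, an embedding can be viewed as a lookup table with one vector of `embedding_dim` numbers per vocabulary word. The vocabulary, the function name `build_embedding_table`, and the random initial values below are illustrative assumptions; in a model such as word2vec the values would be learned during training rather than left random.

```python
import random

def build_embedding_table(vocabulary, embedding_dim, seed=0):
    """Map each word to a randomly initialised dense vector (untrained)."""
    rng = random.Random(seed)
    return {
        word: [rng.uniform(-0.5, 0.5) for _ in range(embedding_dim)]
        for word in vocabulary
    }

# Hypothetical toy vocabulary; real vocabularies hold many thousands of words.
vocabulary = ["error", "warning", "timeout", "disk", "network"]

# The vector size is fixed before training; 8 is used here only for brevity.
embedding_dim = 8

table = build_embedding_table(vocabulary, embedding_dim)
vector = table["timeout"]
print(len(table), "words,", len(vector), "dimensions each")
```

Changing `embedding_dim` changes the size of every vector in the table, which is why it has to be chosen before training starts.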

One difference between embeddings and feature vectors is that embeddings are typically learned automatically from the data, while feature vectors are typically chosen based on domain knowledge or feature engineering. However, these two terms are often used interchangeably. Here is a video going over how the embeddings are obtained from words in a sentence with a bag-of-words approach: https://www.youtube.com/watch?v=viZrOnJclY0

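Pinecone, Milvus, and Google Vertex AI Matching Engine are examples of vector databases. Facebook AI Similarity Search (FAISS) is a similarity search library rather than a full database, and is often used as a building block for vector search.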

The challenge in implementing a vector database is that traditional databases are not optimized for handling high-dimensional vector data, which is often used in machine learning and data science applications.

Vector data is typically represented as arrays of numbers, where each number represents a feature or attribute of the data. For example, an image might be represented as a high-dimensional vector where each dimension represents the color value of a specific pixel. In contrast to traditional databases, where each record consists of a set of fields or columns, vector databases need to store and index large volumes of high-dimensional data in a way that supports efficient similarity search.

In traditional databases, queries are typically based on simple comparisons of scalar values, such as equality or range queries. However, in vector databases, similarity search is the primary operation, which requires specialized algorithms and data structures to efficiently compute the similarity between vectors. These algorithms are designed to handle high-dimensional data and minimize the amount of computation needed to compare vectors, which can be computationally expensive.
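To make the cost of similarity search concrete, here is a minimal sketch of the exhaustive (brute-force) baseline that specialized indexes try to avoid: the query is compared against every stored vector. The function names and the toy two-dimensional data are assumptions made for illustration only.

```python
import math

def euclidean_distance(a, b):
    """Straight-line distance between two equal-length vectors."""
    return math.sqrt(sum((x - y) ** 2 for x, y in zip(a, b)))

def cosine_similarity(a, b):
    """Cosine of the angle between two vectors (0.0 if either is all zeros)."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    if norm_a == 0 or norm_b == 0:
        return 0.0
    return dot / (norm_a * norm_b)

def nearest_neighbors(query, vectors, k):
    """Exhaustive search: one distance computation per stored vector."""
    scored = [(euclidean_distance(query, vec), key) for key, vec in vectors.items()]
    scored.sort()
    return [key for _, key in scored[:k]]

# Hypothetical stored vectors keyed by record id.
vectors = {
    "a": [1.0, 0.0],
    "b": [0.0, 1.0],
    "c": [0.9, 0.1],
    "d": [-1.0, 0.0],
}

top2 = nearest_neighbors([1.0, 0.0], vectors, k=2)
print(top2)
```

The work grows with both the number of stored vectors and their dimensionality, which is the motivation for approximate indexes.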

Vector databases rely on a combination of distance metrics, which define what "similar" means, and indexing techniques, which make similarity search efficient. Here are some examples:

- Euclidean Distance: This is a distance metric that measures the straight-line distance between two points in Euclidean space. It is commonly used in vector databases to compute the distance or similarity between vectors.

- Cosine Similarity: This is a similarity metric that measures the cosine of the angle between two vectors. It is commonly used in text-based applications to measure the similarity between documents or word embeddings.

- Locality-Sensitive Hashing (LSH): This is a technique used to hash high-dimensional vectors into lower-dimensional buckets based on their similarity. It is commonly used in vector databases to speed up similarity search by reducing the number of comparisons needed to find similar vectors.

- Product Quantization: This is a technique used to divide high-dimensional vectors into smaller subvectors and quantize each subvector separately, replacing it with the identifier of its nearest entry in a small codebook. It is commonly used in vector databases to compress the stored vectors, which reduces memory use and speeds up similarity search.

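- Inverted Indexing: This is a technique that maps a key to the list of items associated with it. It is commonly used in text-based applications to speed up search queries by mapping each term to the documents that contain it. In vector search, an inverted file index applies the same idea by grouping vectors into clusters and searching only the clusters closest to the query.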
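The bucketing idea behind locality-sensitive hashing can be sketched with random hyperplanes, one common LSH family for cosine similarity. Each hyperplane contributes one bit depending on which side of it the vector falls, so vectors pointing in similar directions tend to share a signature. This is a simplified illustration under assumed names (`make_hyperplanes`, `lsh_signature`, `build_buckets`, `candidates`), not the implementation used by any particular vector database.

```python
import random
from collections import defaultdict

def make_hyperplanes(num_planes, dim, seed=0):
    """Draw random hyperplane normals from a Gaussian distribution."""
    rng = random.Random(seed)
    return [[rng.gauss(0.0, 1.0) for _ in range(dim)] for _ in range(num_planes)]

def lsh_signature(vector, hyperplanes):
    """One bit per hyperplane: '1' if the vector lies on its non-negative side."""
    bits = []
    for plane in hyperplanes:
        dot = sum(p * x for p, x in zip(plane, vector))
        bits.append("1" if dot >= 0 else "0")
    return "".join(bits)

def build_buckets(vectors, hyperplanes):
    """Group stored vector ids by their signature."""
    buckets = defaultdict(list)
    for key, vec in vectors.items():
        buckets[lsh_signature(vec, hyperplanes)].append(key)
    return buckets

def candidates(query, buckets, hyperplanes):
    """Return only the ids that share the query's bucket."""
    return buckets.get(lsh_signature(query, hyperplanes), [])

# Hypothetical toy data.
vectors = {
    "a": [1.0, 2.0, 3.0],
    "b": [-1.0, -2.0, -3.0],
    "c": [1.1, 2.1, 2.9],
}
hyperplanes = make_hyperplanes(num_planes=6, dim=3)
buckets = build_buckets(vectors, hyperplanes)

# The query points in the same direction as "a", so it lands in the same bucket.
query = [2.0, 4.0, 6.0]
print(candidates(query, buckets, hyperplanes))
```

Only the vectors in the matching bucket need an exact distance computation. Real systems typically use several hash tables to reduce the chance of missing a true neighbor.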

Pinecone is a managed service that performs approximate nearest neighbor search, with the indexing algorithms handled by the service rather than chosen by the user. The main search-related choices you make are the dimension of the vectors and the distance metric (for example cosine, Euclidean, or dot product), which are specified when creating an index. Consult the Pinecone documentation for the parameters supported by the current API.

While OpenSearch is not specifically designed as a vector database like Pinecone, it provides vector search capabilities through its k-NN plugin. The k-nearest neighbors (k-NN) search finds the k vectors closest to a query vector in a high-dimensional space. OpenSearch supports approximate nearest neighbor search using algorithms such as HNSW, provided by underlying engines such as nmslib and Faiss. To use vector search in OpenSearch, you first need to index your vector data in a field of type knn_vector with a fixed dimension. You can then perform a nearest neighbor search by specifying the query vector and the number of nearest neighbors to return. OpenSearch also provides support for vector scoring, which allows you to rank, boost, or filter search results based on their similarity to a query vector.
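As a rough sketch, the request bodies for the OpenSearch k-NN plugin can be built as plain dictionaries: one to create an index with a `knn_vector` field, and one to run a k-NN query against it. The field name `log_embedding` and the helper function names are hypothetical, and the available options vary between OpenSearch versions, so treat this as an illustration to check against the k-NN plugin documentation rather than a definitive configuration.

```python
import json

def knn_index_body(field, dimension):
    """Body for creating an index whose `field` stores fixed-size vectors."""
    return {
        "settings": {"index": {"knn": True}},
        "mappings": {
            "properties": {
                field: {"type": "knn_vector", "dimension": dimension}
            }
        },
    }

def knn_query_body(field, query_vector, k):
    """Body for a search that returns the k nearest neighbours of query_vector."""
    return {
        "size": k,
        "query": {"knn": {field: {"vector": query_vector, "k": k}}},
    }

# Hypothetical field name and a tiny 4-dimensional vector for illustration.
index_body = knn_index_body("log_embedding", dimension=4)
query_body = knn_query_body("log_embedding", [0.1, 0.2, 0.3, 0.4], k=3)

print(json.dumps(index_body, indent=2))
print(json.dumps(query_body, indent=2))
```

These bodies would be sent to the index creation and `_search` endpoints respectively, for example through an HTTP client or an OpenSearch client library.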

What kind of vectorization schemes are useful for log processing?

When processing log data, the goal is typically to extract useful information from the log entries and transform them into a format that can be easily analyzed and searched. Vectorization is a common technique used for this purpose, and there are several vectorization schemes that are applicable to log processing. Here are some examples:

- Bag-of-words: This is a vectorization scheme that represents a document as a bag of words, where each word is represented by a dimension in the vector and the value of the dimension is the frequency of the word in the document. Bag-of-words can be used to represent log entries as a vector of words, which can be used for tasks such as text classification and anomaly detection.

- TF-IDF: This is a vectorization scheme that represents a document as a weighted combination of its term frequency and inverse document frequency. TF-IDF can be used to represent log entries as a vector of weighted words, which can be used for tasks such as information retrieval and text mining.

- Word embeddings: This is a vectorization scheme that represents words as dense vectors in a high-dimensional space, where the distance between vectors reflects the semantic similarity between the words. Word embeddings can be used to represent log entries as a vector of word embeddings, which can be used for tasks such as text classification and entity recognition.

- Sequence embeddings: This is a vectorization scheme that represents a sequence of words as a dense vector in a high-dimensional space, where the distance between vectors reflects the similarity between the sequences. Sequence embeddings can be used to represent log entries as a vector of sequence embeddings, which can be used for tasks such as sequence classification and anomaly detection.

- One-hot encoding: This is a vectorization scheme that represents categorical data as binary vectors, where each dimension corresponds to a possible category and the value of the dimension is 1 if the data belongs to that category and 0 otherwise. One-hot encoding can be used to represent log entries as a vector of categorical features, which can be used for tasks such as classification and clustering.

By using a suitable vectorization scheme, log data can be transformed into a format that can be easily analyzed and searched, enabling tasks such as anomaly detection, root cause analysis, and performance optimization.
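The bag-of-words, TF-IDF, and one-hot schemes can be sketched on a few log lines as follows. The log messages, the whitespace tokenizer, and the particular TF-IDF variant (term frequency times the natural log of N divided by document frequency, without smoothing) are assumptions chosen for brevity; libraries often use slightly different formulas.

```python
import math
from collections import Counter

def tokenize(line):
    """Lowercase and split on whitespace (a deliberately simple tokenizer)."""
    return line.lower().split()

def build_vocabulary(docs):
    """Sorted list of every distinct token seen in the corpus."""
    return sorted({tok for doc in docs for tok in tokenize(doc)})

def bag_of_words(line, vocabulary):
    """Count of each vocabulary word in the line."""
    counts = Counter(tokenize(line))
    return [counts[word] for word in vocabulary]

def tf_idf(line, docs, vocabulary):
    """Term frequency weighted by log(N / document frequency)."""
    tokens = tokenize(line)
    counts = Counter(tokens)
    weights = []
    for word in vocabulary:
        tf = counts[word] / len(tokens) if tokens else 0.0
        df = sum(1 for doc in docs if word in tokenize(doc))
        idf = math.log(len(docs) / df) if df else 0.0
        weights.append(tf * idf)
    return weights

def one_hot(value, categories):
    """1 in the position of the matching category, 0 elsewhere."""
    return [1 if value == category else 0 for category in categories]

# Hypothetical log messages and log levels.
logs = [
    "connection timeout on db",
    "connection established on db",
    "disk full on node",
]
levels = ["DEBUG", "INFO", "WARN", "ERROR"]

vocabulary = build_vocabulary(logs)
counts = bag_of_words(logs[0], vocabulary)
weights = tf_idf(logs[0], logs, vocabulary)
level_vector = one_hot("WARN", levels)

print(vocabulary)
print(counts)
print([round(w, 3) for w in weights])
print(level_vector)
```

In this toy corpus the word "on" appears in every line, so its TF-IDF weight is zero, while the rarer word "timeout" gets the largest weight in the first line. That is the property that makes TF-IDF useful for surfacing unusual log entries.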

Vector database versus Feature store – what’s the difference?

Both vector databases and feature stores are used to manage and serve high-dimensional data, such as embeddings, vectors, and other numerical representations, but there are some key differences between the two.

A vector database is a database optimized for storing and querying high-dimensional vector data. It provides efficient indexing and search algorithms, such as approximate nearest neighbor search, that allow for fast and scalable similarity search. Vector databases are commonly used in machine learning applications, such as recommendation systems and natural language processing, where the goal is to find similar items or entities based on their vector representations.

A feature store, on the other hand, is a centralized repository for machine learning features that provides a way to store, manage, and share feature data across different applications and teams. It is designed to help data scientists and machine learning engineers build, test, and deploy machine learning models more efficiently by providing a unified interface for accessing and managing features.

While both vector databases and feature stores can store and serve high-dimensional data, the main difference is their focus and use case. Vector databases are designed for efficient similarity search, while feature stores are designed for feature management and sharing across different applications and teams. In practice, they can complement each other in many machine learning workflows, with the vector database providing the efficient similarity search capabilities and the feature store providing a centralized and standardized way to manage and share feature data.
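The difference in access pattern can be shown with a toy sketch: a feature store is queried by entity key and feature name, while a vector database is queried by a vector and returns the nearest stored items. The in-memory dictionaries, entity ids, feature names, and function names below are all hypothetical stand-ins, not the API of any real product.

```python
import math

# Feature store pattern: exact lookup of named features for a known entity.
feature_store = {
    "user_42": {"avg_session_minutes": 12.5, "purchases_30d": 3},
    "user_77": {"avg_session_minutes": 4.0, "purchases_30d": 0},
}

def get_features(entity_id, feature_names):
    """Return the requested feature values for one entity, in order."""
    row = feature_store[entity_id]
    return [row[name] for name in feature_names]

# Vector database pattern: similarity search over stored embeddings.
item_vectors = {
    "shoe": [0.9, 0.1],
    "boot": [0.8, 0.2],
    "laptop": [0.1, 0.9],
}

def most_similar(query_vector, k):
    """Return the ids of the k stored vectors closest to the query."""
    def distance(vec):
        return math.sqrt(sum((q - v) ** 2 for q, v in zip(query_vector, vec)))
    ranked = sorted(item_vectors, key=lambda key: distance(item_vectors[key]))
    return ranked[:k]

features = get_features("user_42", ["purchases_30d", "avg_session_minutes"])
similar = most_similar([1.0, 0.0], k=2)

print(features)
print(similar)
```

In a combined workflow, the features retrieved by key might feed a model that produces a query embedding, which is then passed to the vector database to find similar items.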

Comparison of Milvus, Pinecone, Vespa, Weaviate, Vald, GSI, and Qdrant – https://towardsdatascience.com/milvus-pinecone-vespa-weaviate-vald-gsi-what-unites-these-buzz-words-and-what-makes-each-9c65a3bd0696

Anyscale – Using an embeddings database to train an LLM using Ray – https://www.anyscale.com/blog/llm-open-source-search-engine-langchain-ray

OpenAI embeddings example – https://github.com/openai/openai-cookbook/blob/main/examples/Question_answering_using_embeddings.ipynb

HuggingFace sentence embeddings article – https://medium.com/huggingface/universal-word-sentence-embeddings-ce48ddc8fc3a

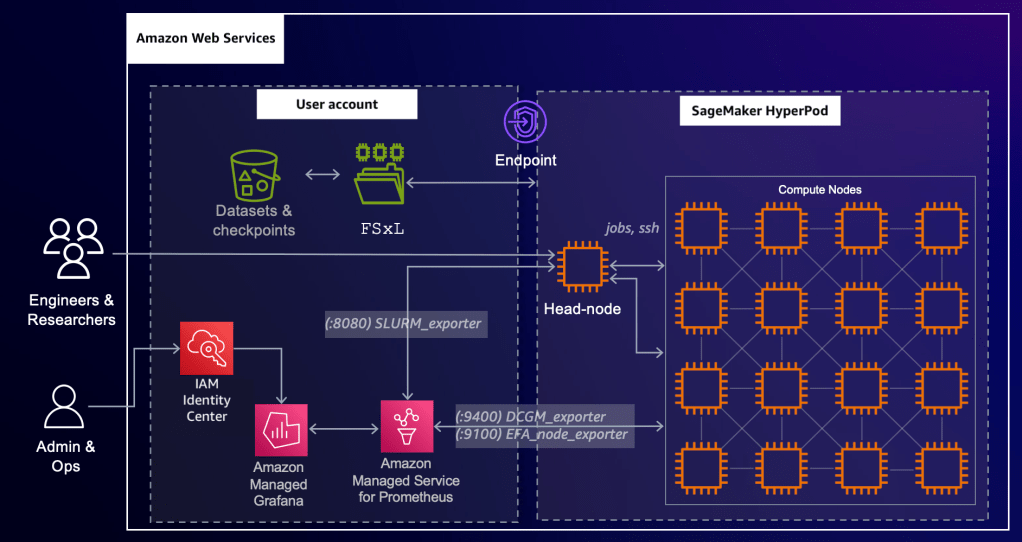

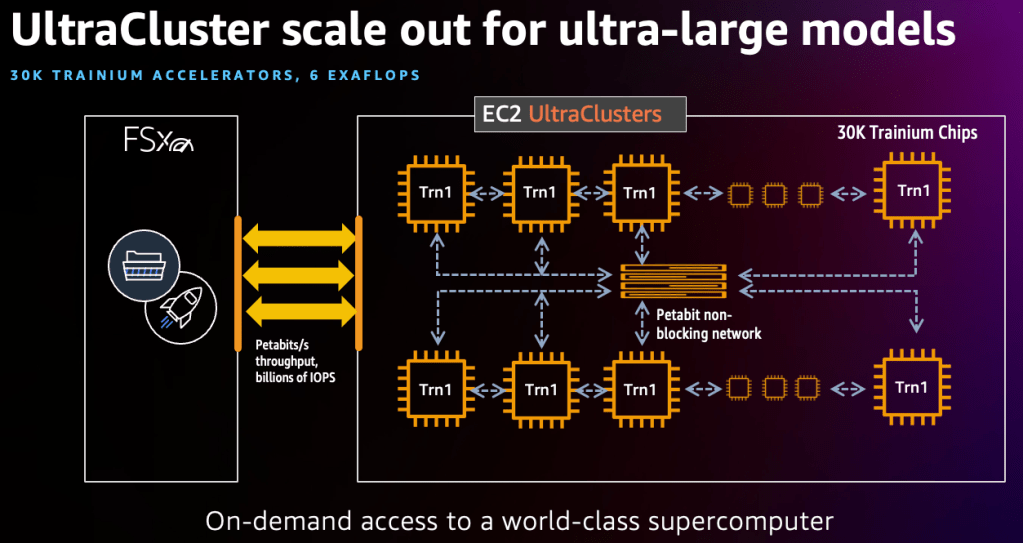

AWS – https://medium.com/@shankar.arunp/augmenting-large-language-models-with-verified-information-sources-leveraging-aws-sagemaker-and-f6be17fb10a8