The Hessian matrices—the second derivatives of the loss function with respect to weights have a pecular organization. For the past twenty years, researchers noticed that these massive matrices are almost entirely concentrated in blocks along the diagonal. This is like a filing cabinet where all the important stuff sits in labeled drawers and the drawers barely talk to each other.

A new paper by Dong, Zhang, Yao, and Sun offers an explaination of why this happens, and their answer is : it’s about the number of classes in your classification problem, not the math of cross-entropy loss as believed earlier. This carries practical consequences for how we train large language models.

Back in 2004, Ronan Collobert noticed something odd while analyzing neural network optimization. When he looked at the Hessian matrix—the landscape of curvature in your loss function—it had a block structure. The diagonal blocks (where parameters interact with themselves) were huge, but the off-diagonal blocks (where different parameter groups interact) were tiny. He proposed an explanation: the cross-entropy loss function creates this through a term called p(1−p), which goes to zero as training progresses.

Here’s why it didn’t make sense, though nobody noticed: if cross-entropy loss was the culprit, why did the block structure show up before any training even happened? The network could be randomly initialized, weights completely random, and boom—already block-diagonal. You haven’t updated a single weight yet. Also, if you used a different loss function (like mean squared error), you still got block structure with multiple classes, so it wasn’t actually about cross-entropy at all.

The real story is more elegant: the block structure emerges directly from how many classes your problem has. With just two classes, forget it—no block structure. With a thousand classes, you get weak structure. With 32,000 classes like Llama 2’s vocabulary, you get basically perfect block-diagonal structure before you’ve even looked at your first data point.

How the Softmax Creates Block Structure (Even at Initialization)

Let’s walk you through the math, because once you see it, it clicks.

Imagine a linear classifier. You have weights V (one row per class), input data xn, and you want to predict which of C classes is correct. The softmax gives you:pn,c=∑j=1Cexp(vjTxn)exp(vcTxn)

This is a probability distribution—all the pn,c values sum to one. When weights are randomly initialized with standard initialization (like He or Xavier), something magical happens: because no class has seen any data yet, each class gets approximately equal probability. For 100 classes, pn,c≈1/100 for each class. For 1,000 classes, pn,c≈1/1000. For 32,000 classes, pn,c≈1/32000.

Now here’s where it gets interesting. The loss function measures how badly you predicted the correct class:L=−N1n=1∑Nlogpn,yn

To understand the shape of this loss—its curvature in different directions—you compute second derivatives. This is the Hessian.

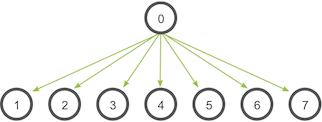

When you compute the second derivative with respect to class i‘s parameters, something different happens depending on whether you’re looking at the diagonal blocks (interactions of class i with itself) versus off-diagonal blocks (interactions of class i with class j):

Diagonal blocks (class i with itself):Hii=N1n=1∑Npn,i(1−pn,i)xnxnT

Notice the coefficient: pn,i(1−pn,i). This has two parts. The first, pn,i, tells you how often we predict class i. The second, (1−pn,i), tells you how much “room” there is to change that prediction. When pn,i=1/C, this product is approximately 1/C.

Off-diagonal blocks (class i interacting with class j, where i=j):Hij=−N1n=1∑Npn,ipn,jxnxnT

Now the coefficient is pn,ipn,j—both probabilities multiplied together. When both equal 1/C, this product is 1/C2, which is much smaller than 1/C.

This is the key: the same data term xnxnT appears in both, but the coefficients differ. The diagonal gets a factor of 1/C while the off-diagonals get 1/C2. So when you measure the total size of each block using the Frobenius norm (which is like taking the square root of the sum of all squared entries):∥Hii∥F∥Hij∥F≈1/C1/C2=C1

With C=100, the off-diagonal is 1% the size of the diagonal. With C=32,000, it’s 0.003% the size. The matrix is essentially block-diagonal.

But why does softmax create this difference? The answer is in the softmax derivative itself. When you change class i‘s parameters, you don’t just change pn,i—you change all the probabilities, because they must sum to one. This coupling is where the p(1−p) term comes from for the diagonal (the “self-coupling” of class i), and the −pq cross-term comes from for off-diagonals (how increasing one probability forces others down).

Why This Was Misunderstood for So Long

Collobert saw that cross-entropy had this special p(1−p) term and thought “aha! That must be why.” But he missed two critical facts:

First, the p(1−p) term appears in both the diagonal and off-diagonal parts of the Hessian—it’s not special to the diagonal. What’s special is that the ratio between them depends on C, not on properties of the loss function.

Second, and this is the killer blow: Collobert only tested small numbers of classes. He’d use cross-entropy with multi-class problems (where he saw block structure) and compare to mean squared error with binary classification (where he didn’t). He was comparing apples to oranges—he was varying both the loss and the number of classes simultaneously, so of course he couldn’t figure out which one mattered. It turns out the number of classes is all that matters.

This is why the new paper’s insight is so satisfying. It reveals that you can take the simplest possible loss (just raw squared error), with the simplest architecture (linear!), and still get block-diagonal structure if C is large. You don’t need any special properties of cross-entropy. You don’t need training to happen. You just need lots of classes.

Before Training Even Starts

This deserves emphasis because it’s genuinely counterintuitive: the Hessian at random initialization is already block-diagonal.

What does this mean in practice? Imagine you initialize a neural network for ImageNet (1,000 classes) with random weights. Before you’ve seen a single data point, before you’ve computed a single gradient, before you’ve updated a single weight: if you were to compute the Hessian matrix of your loss function at those random weights, it would be block-diagonal.

How? Well, the loss function is completely defined. You have a loss that depends on your current weights. The loss is high (your random network predicts garbage), but it’s defined. The second derivative of that loss with respect to your weights is well-defined too. And because of the softmax’s behavior with uniform probabilities, that Hessian has block structure.

This is what the paper means by the “static force”—a force that exists due to architecture, not due to training. The architecture says “we have 1,000 classes” and the softmax probabilites immediately become p≈1/1000, and boom, block structure emerges.

Later, during training, another force emerges—the “dynamic force.” As the network learns, the probabilities become less uniform (maybe it’s very confident the image is a dog), and cross-layer interactions evolve. But by then, the block-diagonal foundation is already there.

What This Reveals About Optimizers

This discovery has concrete implications for how we optimize neural networks, especially large language models.

Modern LLMs like Llama 3 have vocabularies of 128,000 words. That’s 128,000 “classes” in a sense—your model needs to predict which word comes next. According to the theory, this means the Hessian is extraordinarily block-diagonal. In fact, with C=128,000, the off-diagonal blocks are literally 1/128,000 the size of the diagonal blocks.

This is why Adam works so well for LLM training. Adam is a clever optimizer that uses a diagonal approximation of the Hessian:θt+1=θt−αvt+ϵmt

What’s vt? It’s an estimate of diag(H)—just the diagonal of the Hessian, ignoring everything else.

For a fully dense Hessian, ignoring 99% of the matrix would be terrible. But when the Hessian is block-diagonal, ignoring off-diagonal blocks is almost free. You’re throwing away information that barely matters anyway. This is why Adam suddenly becomes effective for LLMs compared to standard gradient descent.

But here’s where it gets better: researchers at Princeton recently realized you can do even better. If the Hessian is block-diagonal, you don’t need the full diagonal—you just need a diagonal per block. This led to Adam-mini, which reduces memory usage by 50% while maintaining the same training quality. Instead of storing diagonal second moments for every single parameter, you compute one second moment per block.

Then there’s Muon, a newer optimizer that goes further. Muon essentially applies a Newton-like update (using inverse Hessian-like information) but only within each block:W←W−αHii−1∇WL

This works remarkably well for training transformers because each weight matrix has approximately independent curvature. The block-diagonal structure means you’re not ignoring important cross-layer interactions—there basically aren’t any (they’re O(1/C) times smaller).

The Theoretical Foundation: Random Matrix Theory

The paper proves these results rigorously using techniques from random matrix theory, a branch of mathematics that studies the statistics of large random matrices. The key tool is the Marchenko-Pastur law, which describes how eigenvalues are distributed in sample covariance matrices.

Why is this relevant? Because the Hessian can be written as:H=N1n=1∑Nwn,ixnxnT

This looks like a weighted sum of outer products—very similar to a covariance matrix. The weights wn,i depend on the softmax probabilities. The theorem shows that as you have more and more classes, and as the dimension and sample size both grow large, the eigenvalues of these blocks follow a well-known distribution.

To prove this with dependent data (the weights depend on the inputs), the authors use a technique called Lindeberg interpolation. The idea is elegant: you smoothly morph between the actual problem (where weights depend on inputs) and an idealized version (where they don’t), showing that the difference vanishes as you go to infinity. This lets you apply classical random matrix results to a case where they shouldn’t technically apply.

Impact on Understanding Neural Network Optimization

This work changes how we think about several things:

First, it unifies our understanding. Previously, block-diagonal structure seemed mysterious or specific to certain losses. Now we see it as a fundamental consequence of having many classes. This is universal—it applies whether you’re using cross-entropy, mean squared error, or any other loss. The class structure is what matters.

Second, it explains why certain optimizers work. Adam, Muon, and block-diagonal preconditioners aren’t magical—they’re just exploiting an underlying property of the loss landscape that’s been there all along, especially for large C. This gives confidence that these methods aren’t empirical accidents but are grounded in geometry.

Third, it suggests new optimizers. If you understand the Hessian’s structure, you can design optimizers that respect that structure. You might use different learning rates for different blocks, or apply block-wise preconditioning, or use Newton-like updates within blocks while keeping first-order updates for cross-block terms.

Fourth, it reveals scalability properties. As C increases (larger vocabularies, more classes), the block structure gets stronger, and diagonal approximations get better. This suggests that bigger problems might actually be easier to optimize in some sense, because the Hessian becomes simpler.

Practical Takeaway for Builders

If you’re building or training large language models, here’s what matters:

Your optimizer choice isn’t arbitrary. Adam works well because your Hessian (determined by your vocabulary size, which is huge) is approximately block-diagonal. More sophisticated second-order methods like Muon work even better because they exploit this structure directly.

If you were training a 10-class image classifier, you might not see much benefit from these structure-aware optimizers—the Hessian isn’t that block-diagonal. But for an LLM with a 100,000-word vocabulary? The structure is so strong that any optimizer ignoring it is leaving performance on the table.

The deeper insight is that the problem size and the problem structure are linked. As you scale up (more classes, bigger vocabularies), you’re not just making the problem bigger—you’re changing its fundamental geometry in ways that make it easier to solve with the right algorithms.

And now, thanks to this paper, we know exactly what that geometry is: a collection of nearly independent blocks, connected only by infinitesimal threads, waiting to be exploited.